We’ve officially entered the “production phase” of Artificial Intelligence. In just a few short years, AI has moved from experimental pilots to being embedded in nearly every major business function—by some estimates, used regularly by 88% of organizations. But there is a growing, uncomfortable truth in boardrooms today: many leaders cannot actually explain how their AI models arrive at specific conclusions.

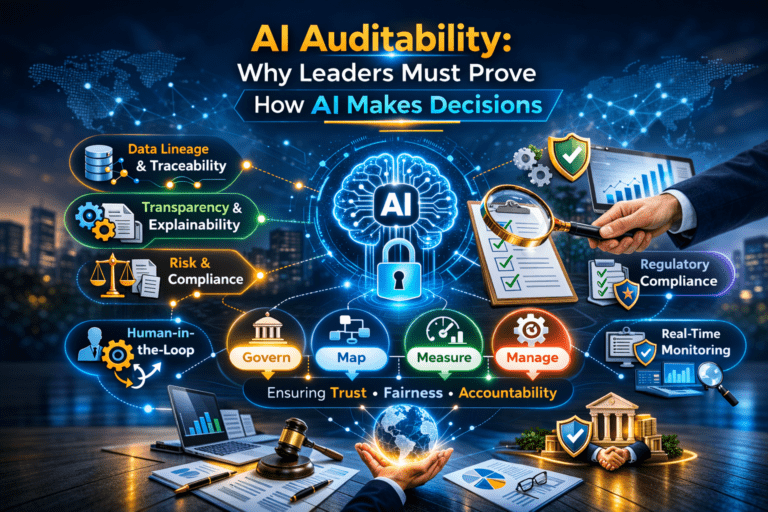

This “black box” problem is no longer just a technical headache; it is a top-tier governance risk. As AI evolves from simple assistants to “agentic” systems that can act autonomously, the pressure for leaders to prove how decisions are made—a concept known as AI Auditability—has become a business and regulatory imperative.

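It is easy to confuse auditability with transparency or explainability, but they aren’t the same. Transparency is about disclosing that AI is in use and how it was built; explainability is about making an individual output understandable; auditability is about keeping enough evidence for someone else to check both.

Think of auditability as a definitive “black box recorder” for your business. It provides the evidence (logs, data lineage, and documented processes) that allows a third party to probe and verify that your AI is behaving as intended.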
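To make the “recorder” idea concrete, here is a minimal Python sketch of a tamper-evident decision log. It is an illustration under stated assumptions, not a reference implementation: the `AuditLog` class, its field names, and the sample model and dataset identifiers are all hypothetical. Each entry stores the model version, a pointer to the data it was built on, the inputs, and the output, and is chained to the previous entry by a SHA-256 hash so that later edits become detectable.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(record: dict) -> str:
    """Hash a record deterministically (sorted keys, compact separators)."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditLog:
    """Hypothetical append-only log; every entry is chained to the one before it."""

    def __init__(self):
        self.entries = []

    def record(self, model_version, dataset_id, inputs, output, timestamp=None):
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {
            "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
            "model_version": model_version,  # which model made the decision
            "dataset_id": dataset_id,        # data lineage: what it was trained on
            "inputs": inputs,                # must be JSON-serializable
            "output": output,
            "prev_hash": prev_hash,
        }
        entry = dict(body, hash=_digest(body))
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


# Illustrative usage with made-up identifiers and values.
log = AuditLog()
log.record("credit-model-1.2", "applications-2024Q4", {"income": 52000}, "approve")
log.record("credit-model-1.2", "applications-2024Q4", {"income": 18000}, "refer")
print(log.verify())
```

A third party who receives the entries can rerun `verify()` without trusting the team that produced them. A production system would also need secure storage, access controls, and retention rules, none of which this sketch covers.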

Why can’t leaders just “trust the tech”? Because when AI fails, the accountability doesn’t dissipate into the software; it remains within the chain of governance. We have already seen the fallout when systems are not auditable:

Corporate Liability: In India, Section 166 of the Companies Act, 2013 requires directors to exercise due and reasonable care, skill, and diligence, a duty that arguably extends to overseeing the reliability of the algorithms the company depends on. Similarly, the EU AI Act now mandates strict transparency and provision of information for “high-risk” systems.

Auditability doesn’t happen by accident; it must be designed into the development process. Leading organizations are moving toward continuous auditing—real-time monitoring instead of periodic “spot checks”—to catch algorithmic drift or bias before it causes harm.
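One way to picture continuous auditing is a scheduled check that compares the scores a model produces today against a baseline captured at approval time. The sketch below uses the Population Stability Index (PSI), a drift measure commonly used in credit risk; it is one option among many, not a method the regulations prescribe. The function name, the sample data, and the 0.25 alert threshold (a widely quoted rule of thumb) are assumptions made for illustration.

```python
import math


def population_stability_index(baseline, current, bins=10):
    """PSI between two samples, using equal-width bins derived from the baseline."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values to edge bins
            counts[idx] += 1
        # Floor each share at a tiny value so the logarithm is always defined.
        return [max(c / len(values), 1e-6) for c in counts]

    p, q = proportions(baseline), proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


DRIFT_THRESHOLD = 0.25  # assumed alert level; tune to your own risk appetite

# Made-up data: scores at approval time versus scores observed this week.
baseline_scores = list(range(100))
current_scores = [s + 50 for s in baseline_scores]

psi = population_stability_index(baseline_scores, current_scores)
if psi > DRIFT_THRESHOLD:
    print(f"Drift alert: PSI={psi:.2f} exceeds {DRIFT_THRESHOLD}")
```

Run on a schedule, and with each result written to an audit trail, a check like this turns a periodic spot check into an ongoing record. PSI only flags that a distribution has moved; deciding whether the shift reflects bias or harm still takes human review.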

Here is how to get started:

Prioritize Transparency for High-Risk Cases: If your AI handles recruitment, credit scoring, or healthcare, the EU AI Act requires you to provide clear instructions for use, including the level of accuracy and potential risks to fundamental rights.

Mature governance isn’t a handbrake on innovation; it’s the seatbelt that lets you go faster. Leaders who can prove their AI is fair, secure, and accurate will win the trust of regulators, investors, and customers.

The window for “wait and see” is closing. With the EU AI Act beginning enforcement and the FTC issuing compulsory orders for audit documentation, the era of the unverified algorithm is over. It’s time to move from hoping the AI works to verifying that it does.