In January 2024, Microsoft disclosed that a Russian state actor, Midnight Blizzard, had been reading its corporate email since late November 2023. The front door wasn’t a zero-day. It was a legacy test OAuth application in a non-production tenant: a forgotten non-human identity with elevated rights, reached through an account with no MFA. The attacker password-sprayed in, hijacked the app, and quietly read mailboxes belonging to senior leadership, cybersecurity, and legal teams.

That breach is now the canonical example of why AI agent identity management is the security control plane of 2026. Replace “legacy OAuth app” with “autonomous AI agent given a shared admin token to query Salesforce,” and you have the same incident, scaled across every Fortune 500 procurement deck.

The economics are no longer debatable. Gartner placed “Agentic AI Demands Cybersecurity Oversight” and “Identity and Access Management Adapts to AI Agents” at the top of its 2026 cybersecurity trends list. IBM’s 2025 Cost of a Data Breach Report found that 13% of organizations suffered a breach of an AI model or application — and 97% of those organizations lacked proper AI access controls.

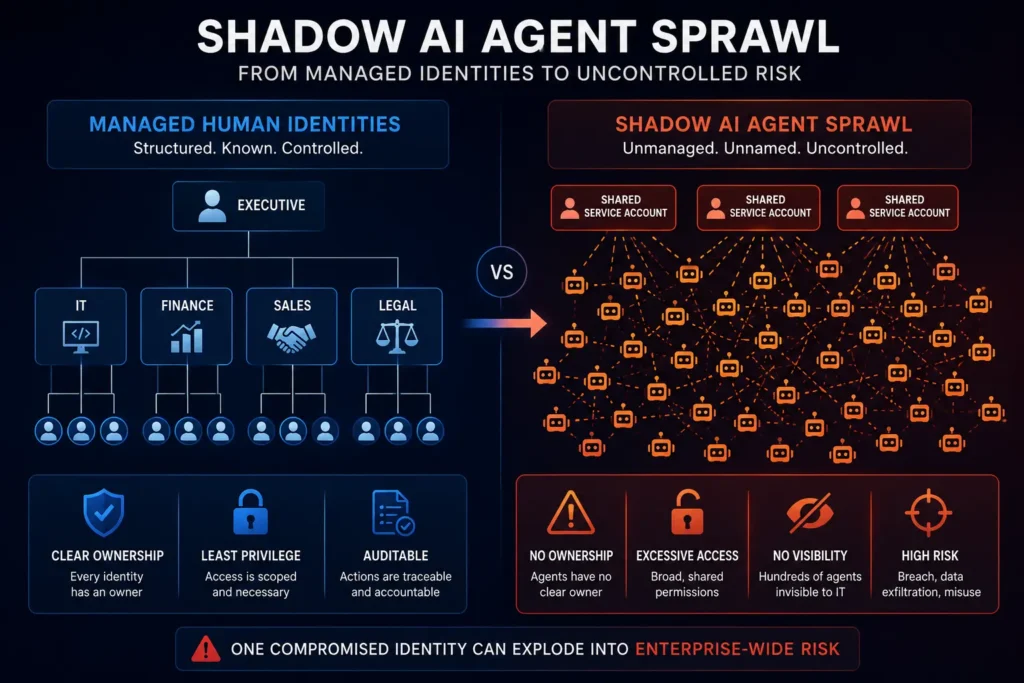

Most identity programs were not built for entities that decide, at runtime, what to do next.

Here’s what you’ll get in this playbook: a named framework (AGENT), the standards that auditors and enterprise buyers expect you to cite, a procurement question kit, and a 30/60/90 roadmap your engineering team can actually ship.

The cost of weak agent identity shows up in three places: breaches, blocked deals, and burned engineering cycles.

Breaches first. IBM’s 2025 report puts the global average breach cost at $4.44 million and the U.S. average at a record $10.22 million. Of organizations that experienced an AI-related incident, 60% saw data compromised and 31% had operations disrupted. One in five organizations reported a breach traced to shadow AI — adding an average $670,000 to total breach cost.

Then the deal cycle. Enterprise procurement teams now embed AI-agent questions in vendor reviews: Who owns this agent? What identity does it run under? Can you produce an audit trail of every action it took on our data? If you answer with “it uses our platform’s main API key,” that deal slows down — or stops.

Finally, engineering drag. Verizon’s 2025 DBIR reports that third-party involvement in breaches doubled year-over-year from 15% to 30%, and 22% of breaches began with credential abuse. Median time to remediate a leaked secret in a GitHub repo: 94 days. GitGuardian counted roughly 29 million new secrets pushed to public GitHub in 2025, with AI-service credential leaks up 81% year-over-year.

The personas hit hardest are predictable. Founders and CTOs at Series A–C AI startups lose enterprise deals because they cannot answer agent-governance questions. CISOs at SaaS scale-ups inherit dozens of agents nobody inventoried. Engineering leaders burn sprints rotating credentials and chasing audit findings instead of shipping product.

It is getting worse because agent deployment is accelerating. Gartner predicts 40% of enterprise applications will include task-specific AI agents by the end of 2026, up from less than 5% in 2025. Identity tooling did not grow at that rate.

Most teams still classify AI agents as service accounts. That is the root cause of most agent breaches we see.

A service account is static: predictable scope, predictable behavior, predictable callers. An AI agent is dynamic: it decides at runtime what tool to call, what data to read, and what action to take — and a successful prompt injection can rewrite its intent mid-session. The OWASP Top 10 for LLM Applications 2025 named this exact failure mode LLM06: Excessive Agency — caused by excessive functionality, excessive permissions, or excessive autonomy.

Then OWASP went further. On December 9, 2025, the OWASP GenAI Security Project released the OWASP Top 10 for Agentic Applications 2026. ASI03 — Identity and Privilege Abuse sits in the top three, alongside Agent Goal Hijack and Tool Misuse.

The takeaway: AI agents are a new identity class between human users and traditional non-human identities. They need their own primitives.

After consulting with AI-native SaaS teams preparing for SOC 2, ISO 42001, and enterprise procurement, SecureFLO developed the AGENT framework — five controls every agent in production should pass.

| Letter | Control | What it means |
|---|---|---|
| A | Attest | Every agent has a unique, cryptographically verifiable identity tied to a human owner |
| G | Grant | Credentials are just-in-time, short-lived, and scoped per task, never shared |
| E | Enclose | Agents run inside a sandbox with explicit allow-lists on tools, data, and outbound calls |
| N | Notarize | Every action is logged with a delegation chain attributable to a human principal |
| T | Terminate | Agents are deprovisioned automatically at end of lifecycle, leaving no zombie identities |

Run an agent through AGENT before it touches production. If any letter fails, do not deploy.
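The "any letter fails, do not deploy" rule can be sketched as a simple pre-deployment gate. This is a minimal illustration only: the `AgentReview` record and the `deploy_gate` and `failed_controls` functions are hypothetical names invented here, and how each boolean gets set (manual review, automated checks) is left open.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class AgentReview:
    """Hypothetical review record: one boolean per AGENT control."""
    attest: bool      # unique, verifiable identity tied to a human owner
    grant: bool       # just-in-time, short-lived, per-task credentials
    enclose: bool     # sandbox with explicit allow-lists
    notarize: bool    # delegation-chain logging
    terminate: bool   # automated deprovisioning


def failed_controls(review: AgentReview) -> list:
    """Return the name of every control the agent does not pass."""
    return [f.name for f in fields(review) if not getattr(review, f.name)]


def deploy_gate(review: AgentReview) -> bool:
    """Allow deployment only when all five controls pass."""
    return not failed_controls(review)


review = AgentReview(attest=True, grant=True, enclose=False,
                     notarize=True, terminate=False)
print(deploy_gate(review))       # False
print(failed_controls(review))   # ['enclose', 'terminate']
```

A gate like this would typically run in CI or a release checklist, so a failing letter blocks the rollout rather than producing a warning.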

Treat agents like non-human employees. Each one needs a unique identity, an accountable human owner, a documented purpose, and an expiration date.

The open standard for this is SPIFFE (Secure Production Identity Framework for Everyone), a CNCF-graduated project. SPIFFE issues each workload a SPIFFE Verifiable Identity Document (SVID) — either an X.509 certificate for mTLS or a JWT — derived from cryptographic node and workload attestation. No pre-shared secret required.
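As a rough sketch of what "a unique identity, an accountable owner, a purpose, and an expiration date" might look like in an agent inventory, the record below pairs a SPIFFE ID with that metadata. The `AgentIdentity` class, the example trust domain, and the path layout are assumptions for illustration. The validator checks only the general `spiffe://<trust-domain>/<path>` shape with simplified character rules; it is not a full implementation of the SPIFFE ID specification and does not verify an SVID.

```python
import re
from dataclasses import dataclass
from datetime import date
from urllib.parse import urlparse

# Simplified character rules, loosely following the SPIFFE ID specification.
_TRUST_DOMAIN = re.compile(r"[a-z0-9._-]+")
_PATH_SEGMENT = re.compile(r"[A-Za-z0-9._-]+")


def is_valid_spiffe_id(value: str) -> bool:
    """Loosely check the shape spiffe://<trust-domain>/<workload-path>."""
    parsed = urlparse(value)
    if parsed.scheme != "spiffe" or parsed.query or parsed.fragment:
        return False
    if not _TRUST_DOMAIN.fullmatch(parsed.netloc):
        return False
    # This sketch requires a non-empty path, e.g. /agents/support-refund.
    segments = parsed.path.split("/")[1:]
    return bool(segments) and all(
        _PATH_SEGMENT.fullmatch(s) and s not in (".", "..") for s in segments
    )


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical inventory record for one agent."""
    spiffe_id: str
    owner: str        # accountable human
    purpose: str
    expires_on: date

    def __post_init__(self) -> None:
        if not is_valid_spiffe_id(self.spiffe_id):
            raise ValueError(f"not a SPIFFE ID: {self.spiffe_id!r}")
        if not self.owner or not self.purpose:
            raise ValueError("owner and purpose are required")


agent = AgentIdentity(
    spiffe_id="spiffe://example.org/agents/support-refund",
    owner="jane@example.org",
    purpose="issue partial refunds",
    expires_on=date(2026, 12, 31),
)
print(agent.spiffe_id)
```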

NIST SP 800-207 (Zero Trust Architecture) explicitly contemplates this in Section 5.7, which addresses non-person entities (NPEs) in ZTA administration. Its companion document, NIST SP 800-207A, references SPIFFE as a service-mesh identity primitive.

The contrarian implication: stop reusing the platform’s main API key across agents. If three agents share one credential and one gets hijacked, you have lost attribution for all three.

Long-lived static secrets are the most reliable initial access vector in the modern enterprise. Verizon’s DBIR found 22% of breaches start with credential abuse. The 2024 campaign against Snowflake customers hit ~165 organizations through stolen credentials, some of them years old and never rotated, on accounts that lacked MFA.

For agents, the right primitive is OAuth 2.0 Token Exchange (RFC 8693) — a standards-track IETF protocol that issues short-lived, audience-bound tokens for delegated actions. When an agent acts on a user’s behalf, it carries an act claim identifying itself as the actor, while the subject_token identifies the user. The downstream API sees the full delegation chain.

This is the pattern Microsoft, Okta, and AWS all converged on for agent-to-tool calls. Combine it with workload identity federation for cloud APIs and you eliminate static cloud credentials entirely.
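To make the token exchange request concrete, here is a minimal sketch of the form-encoded body an agent might send to an authorization server's token endpoint. The parameter names and token-type URNs come from RFC 8693; everything else (the function name, the placeholder tokens, the audience URL, the scope string, and the assumption that the server accepts an `actor_token`) is illustrative and depends on your identity provider.

```python
from urllib.parse import parse_qs, urlencode

# Identifiers defined by RFC 8693.
TOKEN_EXCHANGE_GRANT = "urn:ietf:params:oauth:grant-type:token-exchange"
ACCESS_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:access_token"
JWT_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:jwt"


def build_token_exchange_body(user_token: str, agent_token: str,
                              audience: str, scope: str) -> str:
    """Build the application/x-www-form-urlencoded request body.

    subject_token represents the user the agent acts for;
    actor_token represents the agent itself.
    """
    params = {
        "grant_type": TOKEN_EXCHANGE_GRANT,
        "subject_token": user_token,
        "subject_token_type": ACCESS_TOKEN_TYPE,
        "actor_token": agent_token,
        "actor_token_type": JWT_TOKEN_TYPE,
        "audience": audience,   # the downstream API this token is bound to
        "scope": scope,         # hypothetical per-task scope
    }
    return urlencode(params)


body = build_token_exchange_body(
    user_token="user-access-token",       # placeholder value
    agent_token="agent-jwt",              # placeholder, e.g. a JWT-SVID
    audience="https://api.example.com",   # hypothetical downstream API
    scope="refund:partial",
)
print(sorted(parse_qs(body)))
```

The body would be POSTed to the token endpoint over TLS with the client authenticated as the server requires. The response, if granted, contains the short-lived token whose `act` claim names the agent.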

Mini-scenario. A Series B fintech deploys a customer-support agent that can issue partial refunds. Pre-AGENT: the agent uses the support team’s admin API key, scoped to “all customer accounts.” Post-AGENT: the agent receives a token via RFC 8693 scoped to a single customer ID, expiring in 60 seconds, with the support rep preserved as the token’s subject and the agent recorded in the act claim. A prompt injection attempt against this agent maxes out at one refund on one account, not a database dump.
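The refund scenario can be sketched from the API's side: before issuing a refund, the service checks the already-verified token claims. This sketch assumes signature validation has happened upstream, that the human is carried in `sub` and the agent in `act.sub` (the RFC 8693 convention), and that the customer binding lives in a custom `customer_id` claim. The claim name, the scope string, and the identifiers are all hypothetical.

```python
import time
from typing import Optional

REQUIRED_SCOPE = "refund:partial"  # hypothetical scope name


def authorize_refund(claims: dict, customer_id: str,
                     now: Optional[float] = None) -> bool:
    """Allow a refund only for an unexpired, single-customer, delegated token."""
    now = time.time() if now is None else now
    if claims.get("exp", 0) <= now:
        return False                      # token expired
    if REQUIRED_SCOPE not in claims.get("scope", "").split():
        return False                      # wrong or missing scope
    if claims.get("customer_id") != customer_id:
        return False                      # token bound to another customer
    actor = (claims.get("act") or {}).get("sub")
    # Require both the human subject and the acting agent to be present.
    return bool(claims.get("sub")) and bool(actor)


claims = {
    "sub": "rep:alice",                      # human the agent acts for
    "act": {"sub": "agent:support-refund"},  # the agent itself
    "scope": "refund:partial",
    "customer_id": "cust-1042",
    "exp": 1_700_000_060,                    # 60 seconds after issuance
}
print(authorize_refund(claims, "cust-1042", now=1_700_000_000))  # True
print(authorize_refund(claims, "cust-9999", now=1_700_000_000))  # False
print(authorize_refund(claims, "cust-1042", now=1_700_000_061))  # False
```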

Newly deployed agents are the most dangerous. They have permissions but no track record.

The CSA-Oasis State of NHI and AI Security 2026 found that 51% of organizations cite over-permissioned access as a top NHI pain point, and 78% have no documented policy for creating or removing AI identities.

Sandboxing reduces blast radius through three layers:

The OWASP Top 10 for LLM Applications 2025 specifically warns under LLM07 — System Prompt Leakage that system prompts are not security controls. Sandbox boundaries enforced at the infrastructure level are.
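A minimal sketch of explicit allow-lists on tools, data, and outbound calls follows. It is application-level code for illustration; in line with the point above, real enforcement would sit at the infrastructure layer (network policy, IAM, container isolation), with a check like this as an additional guard. `SandboxPolicy`, `PolicyViolation`, and all tool, dataset, and host names are hypothetical.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


class PolicyViolation(Exception):
    """Raised when an agent requests something outside its allow-lists."""


@dataclass(frozen=True)
class SandboxPolicy:
    allowed_tools: frozenset
    allowed_datasets: frozenset
    allowed_hosts: frozenset

    def check(self, tool: str, dataset: str, url: str) -> None:
        """Raise PolicyViolation unless tool, dataset, and host are all listed."""
        host = urlparse(url).hostname
        if tool not in self.allowed_tools:
            raise PolicyViolation(f"tool not allowed: {tool}")
        if dataset not in self.allowed_datasets:
            raise PolicyViolation(f"dataset not allowed: {dataset}")
        if host not in self.allowed_hosts:
            raise PolicyViolation(f"outbound host not allowed: {host}")


policy = SandboxPolicy(
    allowed_tools=frozenset({"issue_refund"}),
    allowed_datasets=frozenset({"orders"}),
    allowed_hosts=frozenset({"payments.example.com"}),
)

# Passes silently: every element is on its allow-list.
policy.check("issue_refund", "orders", "https://payments.example.com/v1/refunds")

try:
    policy.check("issue_refund", "orders", "https://attacker.example.net/upload")
except PolicyViolation as exc:
    print(exc)
```

The policy is deny-by-default: anything not named is rejected, which is the property that limits what a prompt-injected agent can reach.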

Auditors and enterprise buyers ask the same question: “Who authorized this?”

If your agent action log says only agent-prod-7 executed query, you fail. The log needs the full delegation chain: which user initiated the workflow, which agent picked up the task, what tool was invoked, what data was returned. RFC 8693’s act claim was designed for exactly this.
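One way such a log entry could be produced is sketched below. RFC 8693 lets `act` claims nest, with the outermost `act` naming the current actor and inner ones naming earlier actors, so the helper flattens that nesting into a list. The record layout, field names, and identifiers are assumptions for illustration, not a standard log schema.

```python
import json
from datetime import datetime, timezone


def delegation_chain(claims: dict) -> list:
    """Flatten nested act claims: current actor first, earliest actor last."""
    chain = []
    act = claims.get("act")
    while isinstance(act, dict):
        chain.append(act.get("sub"))
        act = act.get("act")
    return chain


def audit_record(claims: dict, tool: str, resource: str, at=None) -> str:
    """Serialize one agent action with its human principal and actor chain."""
    at = at or datetime.now(timezone.utc)
    return json.dumps({
        "timestamp": at.isoformat(),
        "principal": claims.get("sub"),      # human who initiated the workflow
        "actors": delegation_chain(claims),  # agents acting on their behalf
        "tool": tool,
        "resource": resource,
    }, sort_keys=True)


claims = {
    "sub": "practitioner:smith",
    "act": {"sub": "agent:summarizer", "act": {"sub": "agent:intake"}},
}
print(audit_record(claims, tool="read_record", resource="phi/record-881"))
```

With records shaped like this, "every record an agent touched on behalf of a given person" becomes a filter on `principal` rather than a forensic reconstruction.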

Mini-scenario. A scale-up serving healthcare customers fields a HIPAA audit question: “Show us every PHI record an AI agent touched on behalf of practitioner Smith last quarter.” Pre-AGENT: impossible — the agent logged its own actions but lost the practitioner mapping. Post-AGENT: a single query, exportable as audit evidence.

Most agent breaches we triage involve an identity that should have been deleted months ago. The Internet Archive breach traced back to a GitLab token exposed in a configuration file since December 2022 — never rotated, never revoked. The Microsoft Midnight Blizzard pivot used a legacy non-production test OAuth app.

Every agent identity needs an automated end-of-life trigger: project closed, owner left, ticket resolved, deployment retired. The CSA-Oasis 2026 survey found only 14% of organizations have fully automated AI identity creation and removal. That is the number that has to move.
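The end-of-life triggers listed above could be evaluated on a schedule with something like the following sketch. The `AgentLifecycle` fields and the idea of feeding them from HR, project-tracking, and deployment systems are assumptions; the actual revocation call is deliberately left out because it depends on your identity provider.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class AgentLifecycle:
    """Hypothetical lifecycle state for one agent identity."""
    agent_id: str
    owner_active: bool      # is the accountable human still employed?
    project_open: bool      # is the project or ticket still open?
    deployment_live: bool   # is the deployment still running?
    expires_on: date


def termination_reasons(agent: AgentLifecycle, today: date) -> list:
    """Return every trigger that says this identity should be deprovisioned."""
    reasons = []
    if not agent.owner_active:
        reasons.append("owner left")
    if not agent.project_open:
        reasons.append("project closed")
    if not agent.deployment_live:
        reasons.append("deployment retired")
    if today >= agent.expires_on:
        reasons.append("expired")
    return reasons


agent = AgentLifecycle("agent-prod-7", owner_active=False, project_open=True,
                       deployment_live=True, expires_on=date(2026, 6, 30))
print(termination_reasons(agent, today=date(2026, 7, 1)))
# ['owner left', 'expired']
```

Any non-empty result would feed an automated revoke-and-delete step, so no identity outlives its owner or purpose while waiting for a manual cleanup.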

This is where most blog posts stop. We map AGENT to the controls your auditor and your enterprise customer will name explicitly.

| AGENT control | SOC 2 | ISO 27001:2022 | ISO 42001:2023 | NIST AI RMF | OWASP Agentic 2026 |
|---|---|---|---|---|---|
| Attest | CC6.1 | A.5.16 Identity mgmt | A.6 AI lifecycle | MAP-3 | ASI03 |
| Grant | CC6.2 / CC6.3 | A.5.17 / A.8.5 | A.6.2.2 | MANAGE-2 | ASI03 |
| Enclose | CC6.6 | A.8.3 / A.8.22 | A.6.2.5 | MANAGE-1 | ASI02 / ASI04 |
| Notarize | CC7.2 | A.8.15 logging | A.6.2.6 | MEASURE-2 | ASI09 |
| Terminate | CC6.3 | A.5.18 | A.6.2.3 | GOVERN-1.5 | ASI10 |

ISO/IEC 42001:2023, published December 2023, is the first certifiable AI management system standard. Microsoft, AWS, and Google Cloud are all certified. If you sell AI-enabled software into regulated industries in 2026, expect prospects to ask for it.

The EU AI Act’s high-risk obligations become fully applicable on August 2, 2026 (a Commission-proposed Digital Omnibus to delay this to December 2027 received a Council-Parliament political agreement on May 7, 2026 but is not yet formally adopted). Autonomous agents used in employment, financial services, healthcare, and other Annex III contexts fall in scope.

Three patterns reliably fail audits and procurement reviews:

These are the AI-agent questions our clients see in 2026 procurement reviews. Have answers ready:

If you cannot answer four of these crisply, your enterprise deal is at risk.

Here’s how we approach AI agent identity management with our clients.

We start where you are. For a Series A AI startup with eight engineers and no security hire, the first sprint is inventory, ownership assignment, and ripping out shared API keys. For a Series C scale-up preparing for ISO 42001 certification, we focus on policy, attestation architecture, and audit-evidence pipelines. In both cases the goal is the same: pass enterprise security review without slowing the roadmap.

We bring the framework, the evidence templates, and an outside-perspective security voice your customers trust. Most of our clients use us as a fractional security partner rather than building the function in-house at Series B prices.

Relevant capabilities:

Book a 30-minute AI agent identity review. We’ll walk your agent inventory, identify the top three failure patterns, and leave you with a written remediation plan — no obligation. Schedule with our team →

Here’s how the four layers cover a typical 312-question enterprise security questionnaire with a 40-question AI module:

| Question Category | Volume | Trust Stack Layer |
|---|---|---|
| Information security policies & governance | ~40 questions | Layer 1 (SOC 2 / ISO 27001) |
| Access control & identity management | ~35 questions | Layer 1 + Layer 3 |
| Data protection & encryption | ~30 questions | Layer 1 + Layer 3 |
| AI governance & model risk | ~40 questions | Layer 2 (ISO 42001 / NIST AI RMF) |
| Application security & secure development | ~45 questions | Layer 3 |
| Cloud & infrastructure security | ~30 questions | Layer 3 |
| Incident response & business continuity | ~30 questions | Layer 4 |
| Vendor & third-party risk | ~25 questions | Layer 1 + Layer 4 |
| Privacy & regulatory compliance | ~25 questions | Layer 1 |
| Continuous monitoring & assurance | ~12 questions | Layer 4 |

When the stack is built, the questionnaire stops being an interrogation and becomes a recital. Your team isn’t researching answers — they’re attaching pre-existing evidence.

Security work translates to revenue when you frame it correctly.

| Metric | Before AGENT | After AGENT |
|---|---|---|
| Enterprise deal cycle | 9–14 months stalled at security review | 30–45 days cleared with prepared evidence |
| Audit pass rate (SOC 2 / ISO 42001) | Findings under CC6, A.5.16, A.5.18 | Zero identity-related qualifications |
| Engineering time on credential rotation | 1 sprint per quarter | Continuous, automated |
| Cyber insurance renewal | Premium increases, exclusions added | Stable terms, AI coverage retained |
| Breach exposure | $4.44M global average (IBM 2025) | Sandbox limits blast radius; lower expected loss |

The board-level translation: agent identity is a revenue function. Companies that close the gap move faster through enterprise procurement. Companies that ignore it lose deals to competitors who didn’t.

Days 1–30: Inventory and stop the bleeding. Discover every agent in production. Assign a human owner to each. Rotate or revoke any shared API keys. Deploy a secrets scanner to your repos.

Days 31–60: Attest, grant, and enclose. Stand up per-agent identity via SPIFFE or your cloud provider’s workload identity. Migrate agent-to-tool calls to OAuth Token Exchange with the act claim. Define tool allow-lists per agent.

Days 61–90: Notarize and terminate. Wire delegation-chain logging into your SIEM. Define deprovisioning triggers. Run a tabletop on “compromised agent” and measure blast radius. Map controls to SOC 2, ISO 42001, and OWASP Agentic Top 10.

What is AI agent identity management?

AI agent identity management assigns each autonomous AI agent a unique, cryptographically verifiable identity with scoped permissions, just-in-time credentials, sandboxed execution, and a defined lifecycle. It treats agents as a distinct identity class — separate from human users and static service accounts — because agents choose actions at runtime and need attribution, scoping, and runtime governance.

Are AI agents the same as non-human identities (NHIs)?

AI agents are a subclass of NHIs, but they require stricter controls. Unlike service accounts or API keys, agents decide at runtime, chain tool calls, and can be steered by prompt injection. That autonomy demands per-task credentials, behavioral monitoring, and delegation-chain auditing that legacy NHI tooling does not provide.

How do you authenticate an AI agent?

Use cryptographic workload identity — such as SPIFFE/SPIRE-issued SVIDs or OIDC-federated tokens — instead of shared API keys. For multi-step calls on a user’s behalf, use OAuth 2.0 Token Exchange (RFC 8693) with the act claim to preserve the delegation chain. Eliminate long-lived static secrets entirely.

What compliance standards apply to AI agents in 2026?

SOC 2 CC6 controls, ISO/IEC 27001:2022 Annex A, ISO/IEC 42001:2023, NIST AI RMF, NIST SP 800-207 Zero Trust, and the OWASP Top 10 for Agentic Applications 2026 all apply. The EU AI Act’s high-risk obligations also affect agents in regulated decision-making contexts beginning August 2026.

What is the biggest mistake teams make when deploying AI agents?

Reusing a shared service account or admin API key across multiple agents. This collapses attribution, breaks least privilege, and amplifies blast radius if one agent is hijacked. IBM’s 2025 report found 97% of organizations breached through AI lacked proper AI access controls. Per-agent identity is the foundational fix.

How does AI agent identity management affect enterprise sales?

Enterprise security questionnaires in 2026 increasingly ask how AI agents are identified, scoped, and audited. Vendors that can demonstrate per-agent identity, JIT credentials, and OWASP-aligned controls move through procurement faster. Weak agent governance now blocks deals the way missing SOC 2 reports did three years ago.

AI agents are the fastest-growing identity class in your environment, and most are still authenticating with credentials that should have been deleted last year. The frameworks, standards, and tooling now exist — AGENT, SPIFFE, RFC 8693, ISO 42001, OWASP Agentic Top 10 — to do this properly. Your next enterprise deal, audit, and insurance renewal will measure whether you’ve used them. Book your AI agent identity review with SecureFLO this week → Schedule now.
