AI Security Questionnaires: Why Most Startups Fail (And the Trust Stack That Fixes It)

It’s Monday. Your enterprise prospect just sent a 312-question security questionnaire. Forty of those questions are about AI — model bias, training data lineage, ISO 42001, NIST AI RMF. Your Series B closes in six weeks.

You don’t have answers.

You’re not alone. The average enterprise security team now faces 500+ vendor questionnaires a year, and AI-specific sections are the fastest-growing category. The cost of getting them wrong isn’t a failed audit. It’s a stalled deal, a slipped quarter, and a CFO who starts asking why ARR forecasts keep moving.

This is the playbook for getting through them. Not by answering faster. By being the kind of company that doesn’t have to scramble.

Here’s what you’ll get: a 4-layer AI Trust Stack framework that maps every common AI security questionnaire question to a control you can build before the questionnaire arrives — so security review becomes a 5-day rubber stamp, not a 6-week deal-killer.

Why AI Security Questionnaires Are Costing You Right Now

Most founders see security questionnaires as a sales tax. They’re not. They’re a revenue regulator — and in 2026, the regulator got stricter.

Three forces converged in the last 18 months:

1. Enterprise buyers added AI modules to their standard questionnaires. The CAIQ, SIG Lite, and most internal vendor risk templates now carry dedicated AI sections. Buyers ask about model provenance, training data rights, prompt injection defenses, hallucination controls, and ISO 42001 alignment — questions that didn’t exist in 2023.

2. Regulators caught up. The EU AI Act entered into force in August 2024, and its obligations are phasing in. NIST released the AI Risk Management Framework. ISO/IEC 42001, the first certifiable AI management system standard, was published in December 2023 and is now appearing as a “preferred” or “required” item in enterprise procurement. Microsoft, OpenAI, and Anthropic are already certified. Your buyer’s procurement team noticed.

3. The questionnaire surface area exploded. According to one 2026 industry analysis, the average enterprise security team faces 500+ vendor questionnaires per year, with security engineers spending 20–40 hours on each comprehensive review. On the buyer side, that’s a bottleneck. On the seller side — the side you’re on — it’s a 4-to-8-week deal stall.

Let’s price that out.

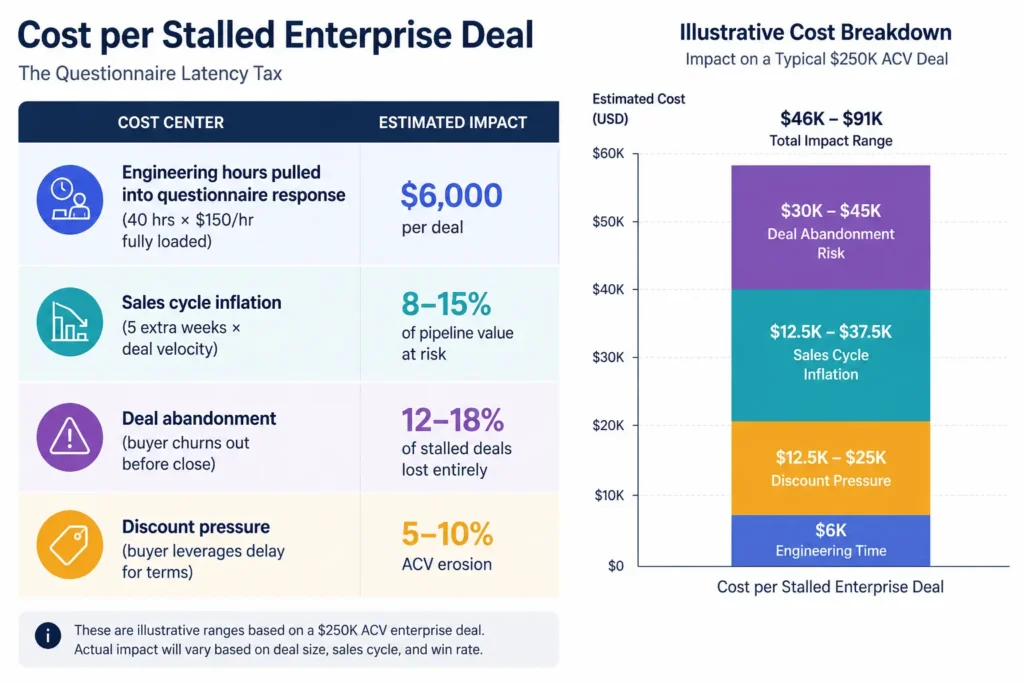

The Questionnaire Latency Tax (a metric we use with clients)

If your average enterprise deal is $250K ACV and your security review takes 6 weeks instead of 1, here’s the real cost:

For a 30-person Series B SaaS doing $5M ARR with a 60% enterprise mix, the math gets ugly fast: a single quarter of slow questionnaire turnaround can cost $400K–$800K in pushed or lost revenue. The IBM Cost of a Data Breach Report 2025 puts the global average breach cost at $4.4M and found that 97% of organizations reporting an AI-related security incident lacked proper AI access controls. That is exactly why enterprise buyers are scared, and exactly why their questionnaires got longer.
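To make the latency arithmetic concrete, here is a minimal Python sketch. It uses the $250K ACV and the 6-week versus 1-week review times from the example above. The `deals_per_quarter` value and the two formulas are illustrative assumptions, not the calculation behind the $400K–$800K range.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LatencyTax:
    acv: float              # annual contract value per deal, in dollars
    slow_weeks: float       # security review duration today
    fast_weeks: float       # review duration with controls already in place
    deals_per_quarter: int  # hypothetical pipeline size, not from the article

    @property
    def weeks_lost(self) -> float:
        return self.slow_weeks - self.fast_weeks

    @property
    def deferred_revenue_per_deal(self) -> float:
        # First-year revenue that starts later because the contract starts later.
        return self.acv * self.weeks_lost / 52

    @property
    def acv_exposed_to_slip(self) -> float:
        # Total ACV whose close date moves out by `weeks_lost`.
        return self.acv * self.deals_per_quarter


tax = LatencyTax(acv=250_000, slow_weeks=6, fast_weeks=1, deals_per_quarter=3)
print(f"Weeks lost per deal: {tax.weeks_lost}")
print(f"Deferred revenue per deal: ${tax.deferred_revenue_per_deal:,.0f}")
print(f"ACV exposed to a quarter slip: ${tax.acv_exposed_to_slip:,.0f}")
```

The deferred-revenue figure is the conservative floor. The larger risk is the exposed ACV: a five-week delay late in a quarter can push the whole contract into the next one.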

This problem hits hardest if you’re a Series A–C AI startup selling into financial services, healthcare, or Fortune 1000 — where security review is a hard gate, not a checkbox.

The Contrarian Truth: You Can't Out-Tool This Problem

The market response to questionnaire pain is a wave of AI-powered tools that auto-fill answers from your past responses. They’re useful. They’re also a band-aid.

Here’s why: Auto-fill makes you faster at answering. It doesn’t make your answers good.

If your underlying security posture is thin — no SOC 2, no AI governance program, no penetration testing cadence, no documented model risk process — the world’s best AI questionnaire tool will autofill 312 questions full of “in progress,” “planned for Q3,” and “we have a policy [no you don’t].”

Enterprise reviewers spot that immediately. Then your deal goes to legal, and legal goes silent.

The startups that win enterprise deals on the first pass aren’t faster answerers. They’re pre-answered companies — they’ve architected the controls, evidence, and attestations into the company before any questionnaire arrives.

That’s what the AI Trust Stack does.

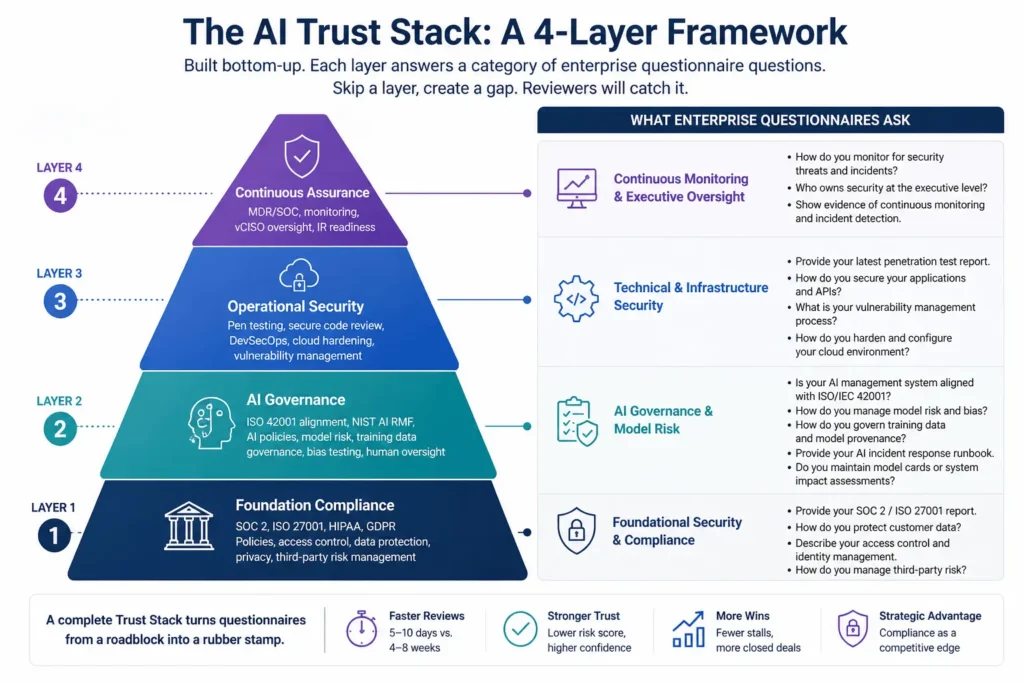

The AI Trust Stack: A 4-Layer Framework

The Trust Stack is built bottom-up. Each layer answers a category of questionnaire questions, and skipping a layer creates a gap reviewers will catch.

Layer 1: Foundation Compliance — Your Table-Stakes Attestations

What it covers: SOC 2 Type II, ISO 27001, HIPAA (if you touch PHI), GDPR (if you serve EU users).

Questionnaire questions it answers: ~40–60% of any standard enterprise security questionnaire.

This is the floor. Without SOC 2, expect your deal to be routed to a higher-risk procurement track. ISO 27001 is often a hard requirement for European enterprise buyers. HIPAA and GDPR are non-negotiable in their respective markets.

Common pitfall: Founders try to skip this layer because they think AI-specific certifications will compensate. They won’t. ISO 42001 is designed to integrate with ISO 27001, not replace it, and most auditors will tell you to do 27001 first.

SecureFLO pattern: The companies we work with that closed enterprise deals fastest had SOC 2 Type II in hand 6–9 months before they thought they’d need it. Our audit-readiness assessment maps your current state to the controls auditors actually test, not the ones the certification body markets.

Layer 2: AI Governance — Where Most Startups Are Exposed

What it covers: ISO 42001 alignment, NIST AI Risk Management Framework, internal AI usage policy, model risk register, training data governance, bias testing, human-oversight protocols.

Questionnaire questions it answers: The 30–60 AI-specific questions that didn’t exist 18 months ago.

This is the layer where 2026 questionnaires are catching startups flat-footed. Buyers now ask things like:

- “Describe your AI management system. Is it aligned with ISO/IEC 42001?”

- “What is your process for evaluating model bias before and after deployment?”

- “How do you document and approve the use of third-party AI APIs internally?”

- “Provide your AI incident response runbook.”

- “Do you maintain a model card or system impact assessment for production AI features?”

If your answer is “we use OpenAI for some things and we’ll get back to you,” the deal is already cooling.

ISO 42001 covers both sides of AI exposure: how your team uses AI tools internally (Copilot, ChatGPT, custom agents) and how AI is built into your product. Most startups need to address both. The standard’s Annex A controls map cleanly to the AI-specific questions enterprise buyers are now asking — which is exactly why ISO 42001 is showing up as a “preferred vendor” criterion in procurement.

Common pitfall: Treating AI governance as a marketing page. Reviewers ask for evidence: policies dated within the last 12 months, risk assessment artifacts, documented review cycles. A blog post on your site doesn’t count.
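A quick way to catch stale evidence before a reviewer does is to keep the register machine-readable and check dates against the 12-month window. The sketch below is illustrative: `EvidenceItem`, `stale_items`, and the sample entries are hypothetical names and data, not part of any standard or tool.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class EvidenceItem:
    name: str
    last_reviewed: date


def stale_items(items, today, max_age_days=365):
    """Return names of items whose last review is older than max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [item.name for item in items if item.last_reviewed < cutoff]


# Hypothetical register entries, for illustration only.
register = [
    EvidenceItem("AI usage policy", date(2025, 11, 3)),
    EvidenceItem("Model risk assessment", date(2024, 9, 15)),
    EvidenceItem("Bias testing report", date(2025, 6, 30)),
]

print(stale_items(register, today=date(2026, 3, 1)))
```

Run on a schedule, a check like this turns "policies dated within the last 12 months" from a scramble into a standing alert.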

SecureFLO pattern: This is the gap we see most often. We build AI governance programs aligned with ISO 42001 and NIST AI RMF for AI-first SaaS companies, not as a paper exercise but as a working management system that produces real artifacts auditors and enterprise buyers will accept. Details are on our AI Governance & Assessments service page.

Layer 3: Operational Security — Proof Your Code and Infrastructure Hold Up

What it covers: Penetration testing (annual minimum, often quarterly for AI products), application/API security testing, secure code review, vulnerability management cadence, cloud security posture (AWS/Azure/GCP), DevSecOps integration in CI/CD.

Questionnaire questions it answers: Technical depth questions and any “evidence requested” items.

This is where attestations meet reality. Enterprise reviewers know a SOC 2 report is necessary but not sufficient — they want to see your latest pen test report, your vulnerability disclosure policy, and evidence that your CI/CD pipeline catches secrets and SAST findings before deploy.
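To show what "the pipeline catches secrets before deploy" means mechanically, here is a deliberately small Python sketch of a pre-deploy secret scan. The three patterns and the `scan_text` helper are illustrative assumptions. Dedicated scanners ship far larger rule sets, and this is not a substitute for one.

```python
import re

# Illustrative patterns only; real scanners ship far larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "hardcoded_password": re.compile(r"(?i)\bpassword\s*=\s*['\"][^'\"]{8,}['\"]"),
}


def scan_text(text):
    """Return (line_number, rule_name) pairs for every suspected secret."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for rule_name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, rule_name))
    return findings


# Sample input using AWS's documented example key, not a real credential.
sample = "\n".join([
    "region = 'us-east-1'",
    "key_id = 'AKIAIOSFODNN7EXAMPLE'",
    "password = 'correct-horse-battery'",
])

findings = scan_text(sample)
for line_number, rule_name in findings:
    print(f"line {line_number}: {rule_name}")
# A CI step would exit non-zero whenever findings is non-empty.
```

The evidence a reviewer wants is not the script itself but the pipeline log showing the check runs on every change and blocks the merge when it fails.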

For AI startups specifically, buyers are increasingly asking about:

- Prompt injection testing for any LLM-powered feature

- Output validation controls to prevent data leakage from model responses

- API rate-limiting and abuse controls on AI endpoints

- Model serving infrastructure hardening (especially for self-hosted or fine-tuned models)
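Of the items above, rate limiting is the easiest to illustrate in a few lines. Below is a minimal token-bucket sketch in Python. The class name, the limits (burst of 3, one request per second), and the injected fake clock are assumptions for illustration. A production deployment would more likely enforce this at the API gateway.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `rate` tokens per second."""

    def __init__(self, capacity, rate, clock=time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = float(capacity)
        self.updated_at = clock()

    def allow(self, cost=1.0):
        now = self.clock()
        elapsed = now - self.updated_at
        self.updated_at = now
        # Refill for the elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


# Deterministic demo with a fake clock (hypothetical limits).
fake_now = [0.0]
bucket = TokenBucket(capacity=3, rate=1.0, clock=lambda: fake_now[0])

burst = [bucket.allow() for _ in range(4)]       # fourth call exceeds the burst
fake_now[0] += 2.0                               # two seconds pass, two tokens refill
after_wait = [bucket.allow() for _ in range(3)]  # third call is rejected again
print(burst, after_wait)
```

The `cost` parameter lets an expensive call, such as a long-context completion, consume more than one token, which is a common way to tie the limit to model spend and not just request count.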

Common pitfall: Doing one pen test the week before a big deal and assuming it covers you for 18 months. Enterprise buyers in 2026 increasingly want pen tests dated within the last 6–12 months, with remediation evidence.

SecureFLO pattern: Most AI startups we work with need pen testing as a continuous service, not an annual event — particularly when they ship LLM features that change behavior with every prompt update. Our continuous testing and DevSecOps services embed security into the pipeline rather than bolting it on at the end.

Layer 4: Continuous Assurance — Proof That Security Is Live, Not Snapshot

What it covers: Managed Detection & Response (MDR) or SOC-as-a-Service, continuous compliance monitoring, executive security oversight (vCISO/Fractional CISO), incident response runbooks tested in the last 12 months.

Questionnaire questions it answers: “How do you detect and respond to incidents?” + “Who owns security at the executive level?” + “Show evidence of continuous monitoring.”

This is the layer enterprise buyers increasingly probe at the end of the questionnaire — and increasingly weight heavily in their final risk score. A company with strong attestations but no live monitoring and no clear executive accountability is a company that can pass an audit and still get breached on a Wednesday afternoon.

Common pitfall: Listing “we have logging” as your incident response answer. Buyers want named tooling, named people, named runbooks, and evidence of tabletop exercises.

SecureFLO pattern: For startups that aren’t ready to hire a full-time CISO, our Fractional CISO service provides the executive accountability enterprise reviewers want to see — paired with MDR coverage for the 24/7 monitoring most startups can’t staff internally.

Mapping the Trust Stack to a Real Questionnaire

Here’s how the four layers cover a typical 312-question enterprise security questionnaire with a 40-question AI module:

| Question Category | Volume | Trust Stack Layer |
|---|---|---|
| Information security policies & governance | ~40 questions | Layer 1 (SOC 2 / ISO 27001) |
| Access control & identity management | ~35 questions | Layer 1 + Layer 3 |
| Data protection & encryption | ~30 questions | Layer 1 + Layer 3 |
| AI governance & model risk | ~40 questions | Layer 2 (ISO 42001 / NIST AI RMF) |
| Application security & secure development | ~45 questions | Layer 3 |
| Cloud & infrastructure security | ~30 questions | Layer 3 |
| Incident response & business continuity | ~30 questions | Layer 4 |
| Vendor & third-party risk | ~25 questions | Layer 1 + Layer 4 |
| Privacy & regulatory compliance | ~25 questions | Layer 1 |
| Continuous monitoring & assurance | ~12 questions | Layer 4 |

When the stack is built, the questionnaire stops being an interrogation and becomes a recital. Your team isn’t researching answers — they’re attaching pre-existing evidence.
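The mapping above also works as a gap calculator. The Python sketch below encodes the table's approximate volumes and layer assignments, then counts how many questions you cannot fully evidence for a given set of built layers. The function names and the "Layers 1 and 3 only" scenario are illustrative assumptions.

```python
from collections import defaultdict

# (category, approximate question count, required layers) copied from the table above.
QUESTION_MAP = [
    ("Information security policies & governance", 40, (1,)),
    ("Access control & identity management", 35, (1, 3)),
    ("Data protection & encryption", 30, (1, 3)),
    ("AI governance & model risk", 40, (2,)),
    ("Application security & secure development", 45, (3,)),
    ("Cloud & infrastructure security", 30, (3,)),
    ("Incident response & business continuity", 30, (4,)),
    ("Vendor & third-party risk", 25, (1, 4)),
    ("Privacy & regulatory compliance", 25, (1,)),
    ("Continuous monitoring & assurance", 12, (4,)),
]


def questions_touching(question_map):
    """Count questions that depend on each layer (shared categories count for both)."""
    totals = defaultdict(int)
    for _category, count, layers in question_map:
        for layer in layers:
            totals[layer] += count
    return dict(totals)


def blocked_questions(question_map, built_layers):
    """Count questions where at least one required layer has not been built."""
    built = set(built_layers)
    return sum(count for _category, count, layers in question_map
               if not set(layers) <= built)


total = sum(count for _category, count, _layers in QUESTION_MAP)
print(total)
print(questions_touching(QUESTION_MAP))
print(blocked_questions(QUESTION_MAP, built_layers={1, 3}))
```

In the hypothetical scenario where only Layers 1 and 3 exist, roughly a third of the questionnaire still lacks full evidence, and the AI module accounts for the largest single share of that gap.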

How SecureFLO Helps AI Startups Build the Trust Stack

We work with AI startups, SaaS companies, and scale-ups in exactly this position — past Series A, selling into Fortune 1000, watching deals stall on security review. The pattern is consistent: technical teams that are excellent at building product and uneven at building the documented, audited security posture enterprise buyers now require.

Our approach is built to compress the timeline:

- Layer 1 — Compliance Readiness: We run a gap analysis against SOC 2, ISO 27001, HIPAA, or GDPR (whichever applies), then drive remediation and audit prep. Most clients reach SOC 2 Type I in 4–5 months, Type II 6–9 months after that.

- Layer 2 — AI Governance & ISO 42001: We build the AI management system — policies, risk register, model assessments, training data governance — aligned with ISO 42001 and NIST AI RMF. Whether or not you certify, the artifacts are what enterprise buyers want.

- Layer 3 — Security Testing: Penetration testing, secure code review, and DevSecOps enablement for cloud-native architectures across AWS, Azure, and GCP.

- Layer 4 — Continuous Coverage: Fractional CISO leadership, MDR for 24/7 monitoring, and continuous compliance monitoring so the stack stays valid between audit cycles.

The point isn’t to sell you four projects. It’s to sequence them in the order that unblocks the most deal value first.

Want to see what your Trust Stack looks like today? Book a free 30-minute readiness call → We’ll map your current controls to the gaps blocking your enterprise pipeline.

The Real Business Impact: Before vs. After

The Trust Stack isn’t a security project. It’s a revenue project. Here’s the typical Before/After we see in client engagements:

Before:

- 4–8 week security review per enterprise deal

- 30–40 engineering hours pulled per questionnaire

- 1 in 6 deals stalls past the buyer’s procurement window and dies

- Buyer pushes for SLA concessions to compensate for “vendor risk”

- Cyber insurance premiums climbing with every renewal

After:

- 5–10 day security review (often a single async exchange)

- Sub-5 engineering hours per questionnaire (mostly evidence retrieval)

- Stalled-deal rate cut by 60–80%

- Enterprise procurement stops asking for risk-based discounts

- Cyber insurance underwriters apply a more favorable risk tier

- ISO 42001 + SOC 2 + recent pen test attestation become active sales assets, not just compliance artifacts

The shift is from security as a tax to security as a multiplier. Your security posture becomes a reason to buy, not a hurdle to clear.

FAQ

What is an AI security questionnaire?

An AI security questionnaire is a structured set of questions — typically 30–60 items embedded inside a broader enterprise security review — that evaluates how a vendor governs, builds, and operates AI systems. It covers model risk, training data, bias controls, prompt injection defenses, ISO 42001 alignment, and AI-specific incident response procedures.

How long does an enterprise security review take in 2026?

A typical enterprise security review takes 4–8 weeks for vendors without strong attestations and 5–10 business days for vendors with SOC 2 Type II, ISO 27001, recent pen test reports, and documented AI governance. The gap is widening as enterprise buyers add AI-specific modules that unprepared vendors can’t answer quickly.

Do AI startups need ISO 42001 certification?

Not always required, but increasingly preferred. ISO 42001 is the world’s first certifiable AI management system standard, and enterprise procurement teams now reference it in vendor evaluations. Even without full certification, building an ISO 42001-aligned AI management system gives you the artifacts and policies that enterprise security questionnaires demand.

What’s the difference between ISO 42001 and NIST AI RMF?

ISO 42001 is a certifiable management system standard: you can be audited and certified against it. The NIST AI Risk Management Framework is a voluntary framework with practical guidance on identifying and managing AI risks. Most mature AI governance programs use NIST AI RMF as the working framework and ISO 42001 as the auditable wrapper around it. They’re complementary, not competing.

How much does it cost to prepare an AI startup for enterprise security questionnaires?

For a Series A–B AI startup starting from a low compliance baseline, building a credible AI Trust Stack typically runs $40K–$120K in the first year — covering SOC 2 Type I/II, AI governance program development, penetration testing, and either fractional CISO or MDR coverage. Compared to the revenue impact of stalled deals, the ROI is typically positive within the first 1–2 closed enterprise contracts.

Can questionnaire automation tools replace having strong controls?

No. Questionnaire automation tools (Iris, Arphie, Conveyor, Loopio) speed up answering — but they pull from your existing knowledge base. If the underlying controls aren’t there, the AI auto-fills nonsense, and enterprise reviewers catch it immediately. Build the stack first; automate the answers second.

The Bottom Line

AI security questionnaires aren’t going away — they’re getting longer, sharper, and more determinative of which AI vendors get bought.

The path forward is clear: build the Trust Stack before the questionnaires arrive, in the right sequence, with the right evidence.

Every quarter you delay is a quarter your competitors compound trust faster than you do.

Ready to stop losing deals to security review?

Book your free 30-minute AI Trust Stack readiness assessment →

We’ll map your current controls against the questions enterprise buyers are actually asking in 2026 — and give you a prioritized 90-day plan. Free, no commitment, results in 5 business days.

External References

- NIST AI Risk Management Framework → https://www.nist.gov/itl/ai-risk-management-framework

- ISO/IEC 42001:2023 Standard → https://www.iso.org/standard/42001

- IBM Cost of a Data Breach Report 2025 → https://www.ibm.com/reports/data-breach

- Gartner Top Cybersecurity Trends 2026 → https://www.gartner.com/en/articles/top-cybersecurity-trends-2026